Hidden Markov Models: When Clusters Have Memory

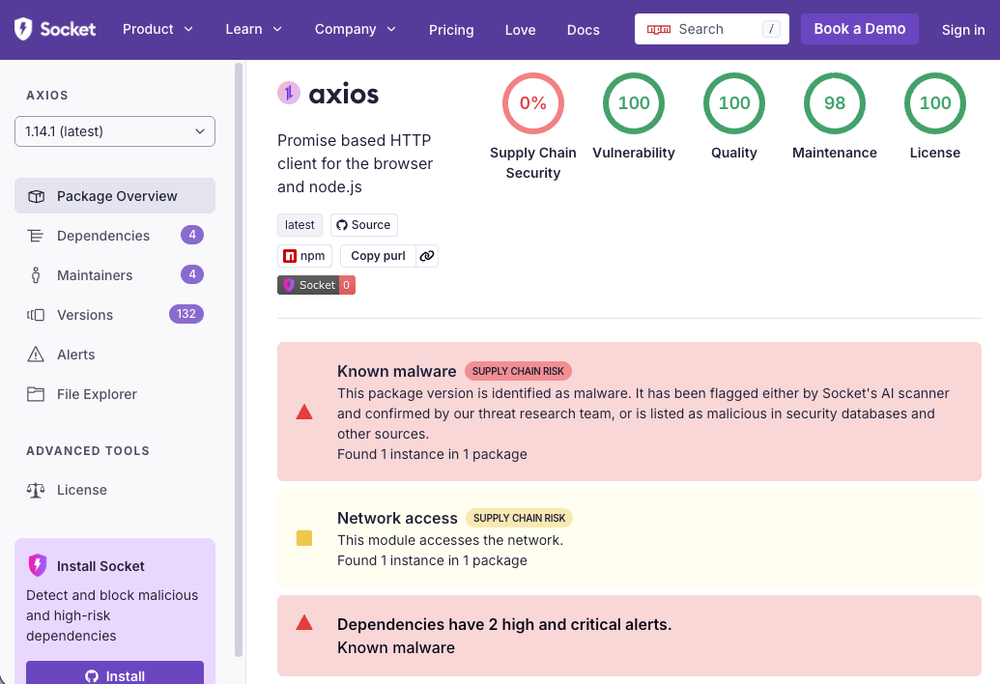

Source: DEV Community

In the previous post, we clustered data into groups with K-Means and GMMs. Both methods assume observations are independent: each data point is analysed in isolation, with no regard for what came before it.

But time series data doesn't work that way. Today's stock market regime depends on yesterday's. A patient's health state today depends on their state last week. Weather tomorrow depends on weather today. When your clusters have memory, you need Hidden Markov Models.

By the end of this post, you'll implement the Forward and Viterbi algorithms from scratch, detect stock market regimes with a Gaussian HMM, and understand why an HMM produces 5x fewer regime switches than K-Means on the same data.

Markov Chains: Adding Memory to Randomness

Before we add the "hidden" part, let's understand Markov chains. A Markov chain is a sequence of random states where the next state depends only on the current state, not the entire history. This is the Markov property. We define a chain with a trans