“Prompt Engineering Is Enough” Is Wrong – Here’s What I Had to Add

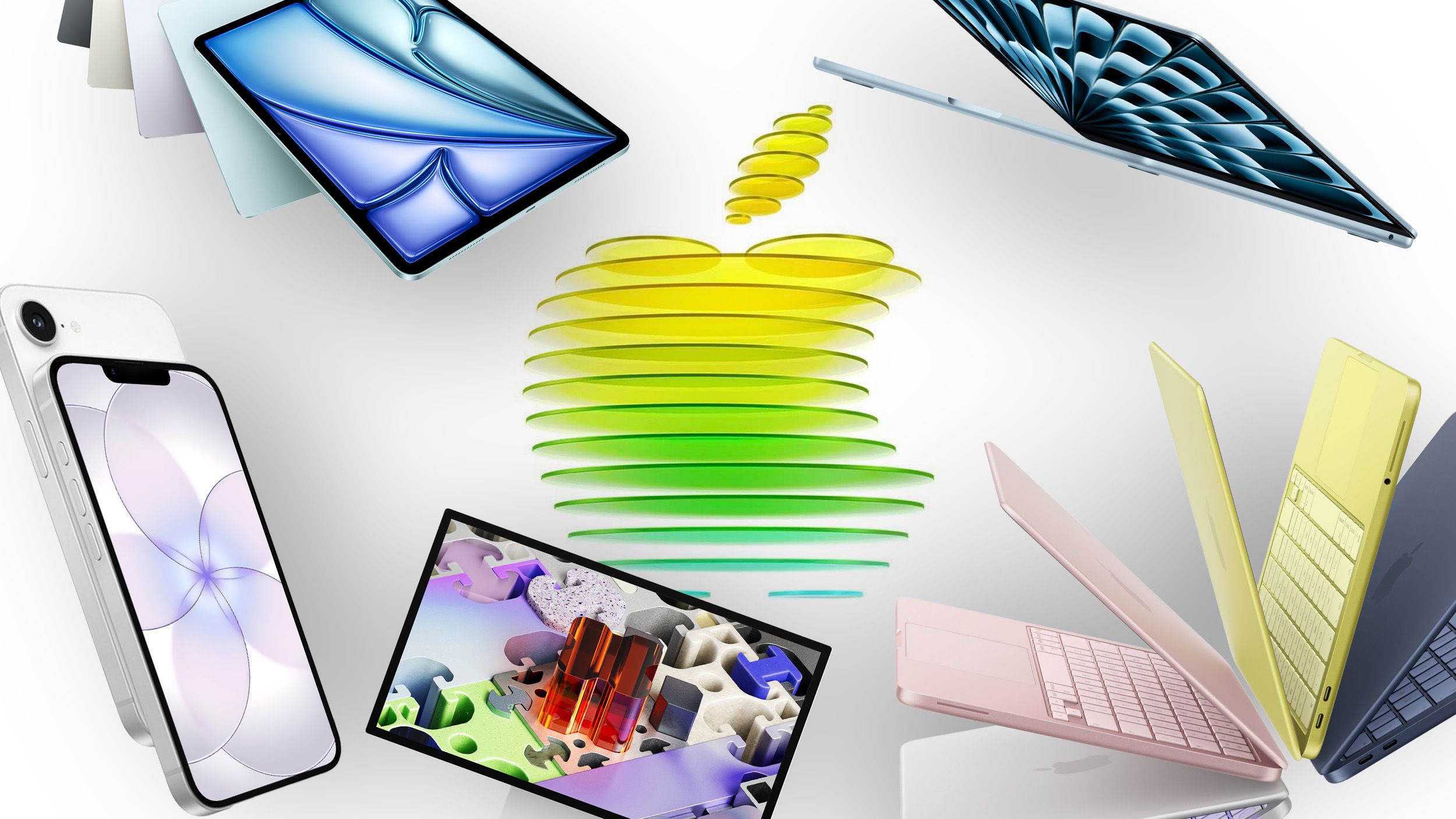

Source: DEV Community

I used to believe the hype. I thought that if I just wrote better prompts (clearer instructions, few‑shot examples, chain‑of‑thought), I could make any LLM do whatever I wanted. I spent weeks refining prompts, tweaking wording, and adding “think step by step” like it was magic.

Then I tried to build something useful. I asked the model to check the weather and tell me whether I needed an umbrella. The response was confident and completely wrong. The model had no access to live data, so it hallucinated a forecast from training data frozen at its cut‑off: made‑up facts wrapped in perfect English. The prompt was excellent. The model was powerful. The result was useless.

That’s when I realised the uncomfortable truth: prompt engineering is not enough. I went back and added the one thing that actually fixed it: tools. I gave the model a simple weather tool and forced it to follow a basic OBSERVE → THINK → ACT loop. Nothing fancy, just the same three steps every single time.

Here’s what happened.

First loop – OBSERVE

The model receives my request: