TurboQuant: Redefining AI efficiency with extreme compression!

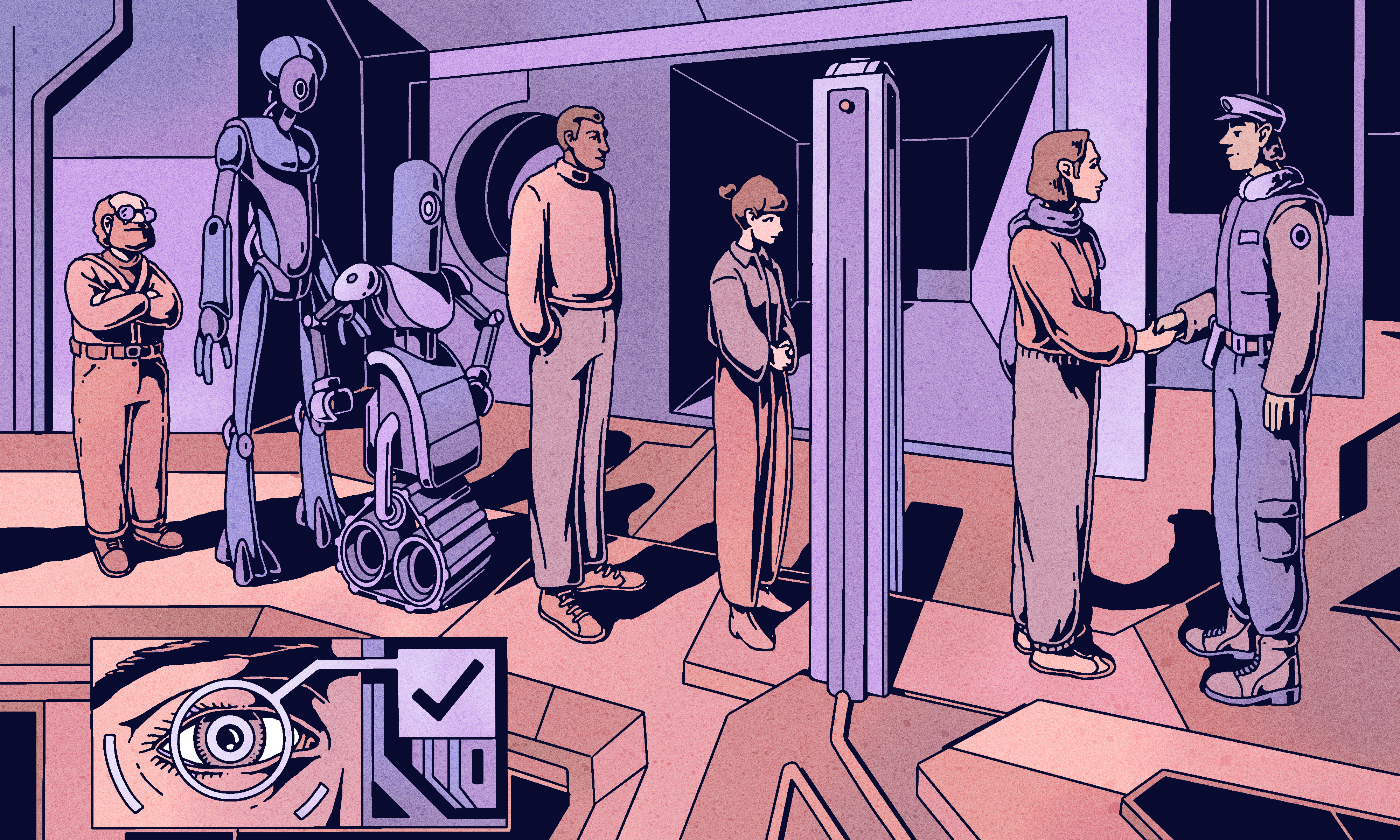

Source: DEV Community

Large language models (LLMs) and other deep neural networks have greatly expanded what artificial intelligence can do. However, their computational and memory demands are a significant hurdle to widespread deployment, particularly in resource-constrained environments such as edge devices, mobile platforms, and embedded systems. Storing model weights and activations in floating-point formats such as FP32 (32 bits per value) or BF16 (16 bits per value) consumes substantial memory and memory bandwidth and requires considerable compute, which increases inference latency and energy consumption.

Model quantization has emerged as a critical technique to mitigate these issues. By reducing the numerical precision of model parameters, quantization can substantially decrease model size, accelerate inference, and lower power requirements. Standard quantization approaches typically target 8-bit integer (INT8) representations, with more aggressive methods exploring 4-bit i