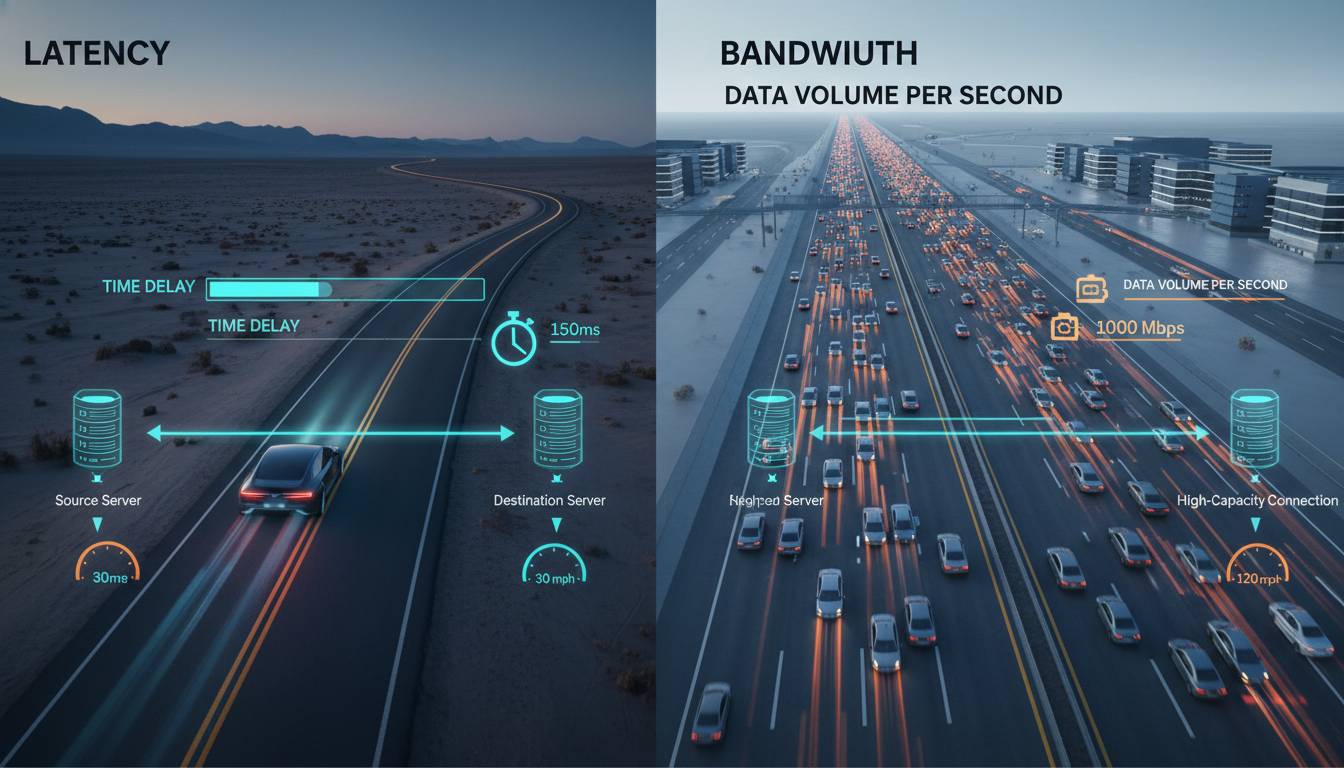

What Is Latency vs Bandwidth? Key Differences Explained

Source: User-Interviews

Latency is the time delay between a request and the response in a network, measured in milliseconds (ms). Bandwidth is the maximum amount of data that can travel through a network per second, measured in bits per second (e.g., Mbps or Gbps). Lower latency means quicker responsiveness; higher bandwidth means more data can be transferred […]
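To make the distinction concrete, here is a minimal sketch of a simplified transfer-time estimate. It assumes that total time is one latency delay plus the payload size divided by bandwidth, and it ignores protocol overhead, congestion, and multiple round trips. The function name `transfer_time_seconds` and the sample figures are illustrative assumptions, not taken from the source.

```python
def transfer_time_seconds(size_bytes: int, bandwidth_bps: float, latency_ms: float) -> float:
    """Estimate the time to deliver a payload under a simplified model.

    Assumption: total time = latency + size / bandwidth, with the latency
    counted once and no protocol overhead.
    """
    if bandwidth_bps <= 0:
        raise ValueError("bandwidth_bps must be positive")
    latency_s = latency_ms / 1000          # milliseconds -> seconds
    size_bits = size_bytes * 8             # bytes -> bits
    return latency_s + size_bits / bandwidth_bps


# Hypothetical link: 100 Mbps bandwidth, 50 ms latency.
small = transfer_time_seconds(1_000, 100e6, 50)        # 1 KB request
large = transfer_time_seconds(1_000_000, 100e6, 50)    # 1 MB download

# Same payloads on a link with 10x the bandwidth but the same latency.
small_fast = transfer_time_seconds(1_000, 1e9, 50)
large_fast = transfer_time_seconds(1_000_000, 1e9, 50)
```

Under this model the small request is dominated by latency, so raising bandwidth barely changes its time, while the large download benefits noticeably from the extra bandwidth.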